Double-barreled questions: what they are and why they're a problem

"How satisfied are you with the speed and reliability of our service?" On the surface, that looks like a perfectly reasonable survey question. But read it again. What if the service is fast but crashes constantly? Or reliable but painfully slow? A respondent who's thrilled with one dimension and frustrated with the other has no honest way to answer.

This is a double-barreled question—a single question that asks about two separate things at once. It's one of the most common survey design mistakes, and it does real damage to data quality. If you've ever looked at survey results and thought "these numbers don't quite make sense," double-barreled questions might be why.

How double-barreled questions work (and don't)

A double-barreled question packs two distinct concepts into one item, forcing respondents to give a single answer that's supposed to cover both. The problem is that the two concepts can have completely different answers.

Here's what happens in practice. A respondent reads "How satisfied are you with the speed and reliability of our service?" and thinks, "Well, speed is great—9 out of 10. But reliability? Maybe a 4." They need to give one number. So they average their feelings, pick whichever dimension feels more important, or just select something in the middle and move on.

No matter what they choose, the data is compromised. You can't tell which dimension drove the response. And when you average hundreds of these compromised responses, you get a number that doesn't accurately represent either speed or reliability. It represents an unknowable blend of both.
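To make the ambiguity concrete, here is a minimal sketch of the respondent from the example above (speed 9, reliability 4). The function name `possible_answers` and the choice of 5 as the "middle" option are illustrative assumptions, not part of any survey tool.

```python
def possible_answers(speed: int, reliability: int, middle_option: int = 5) -> dict[str, float]:
    """Single answers one respondent might plausibly give to a combined question."""
    return {
        "average the two": (speed + reliability) / 2,
        "answer for speed": speed,
        "answer for reliability": reliability,
        "pick the middle": middle_option,  # assumed midpoint of a 10-point scale
    }


# The respondent from the example: speed is a 9, reliability is a 4.
answers = possible_answers(speed=9, reliability=4)
for strategy, answer in answers.items():
    print(f"{strategy}: {answer}")
```

The same person could honestly submit any of four different numbers, and the recorded response carries no trace of which strategy produced it.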

Why these questions slip through

Double-barreled questions are surprisingly easy to write without noticing. They tend to emerge when survey designers try to be efficient, covering more ground with fewer questions. The instinct makes sense. Nobody wants a 40-question survey. But the shortcut of combining questions isn't really a shortcut—it just moves the cost from survey length to data quality.

They also appear when the designer mentally groups related concepts. "Speed and reliability" feel like they belong together because they're both aspects of service performance. "Friendly and helpful" seem like a natural pair because they're both positive qualities. But "related" doesn't mean "interchangeable," and treating them as one introduces ambiguity every time.

A third source is internal review processes. Someone reads a draft survey and says, "Can we also ask about X?" Rather than adding a new question, the designer tacks the new concept onto an existing one. The question gets longer, but the response format stays the same—one answer for two ideas.

How to spot them

The simplest test: read the question and ask whether a respondent could reasonably have different answers for different parts of it. If yes, it's double-barreled.

Additionally, watch for these telltale patterns:

The "and" connector. "How satisfied are you with the quality and price of our product?" Quality and price are independent dimensions. A customer might love the quality and resent the price.

The "or" connector. "Do you find our app easy to navigate or confusing?" This forces respondents to pick one characterization, but navigation might be easy in some sections and confusing in others.

Compound adjectives. "Is our support team knowledgeable and responsive?" Knowledgeable and responsive are separate traits. A team can be deeply knowledgeable but take three days to respond.

Embedded assumptions. "How helpful was the training you received during onboarding?" assumes the respondent received training. If they didn't, the question is unanswerable, because it bundles two things: whether the training happened and how helpful it was.

Multiple actions. "How often do you read and share our newsletter?" Reading and sharing are separate behaviors with potentially very different frequencies. Someone might read every issue and never share a single one.
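If your questions live in a spreadsheet or text file, a first pass over the connector patterns above can be automated. The sketch below is a rough heuristic under stated assumptions: the function name `flag_for_review` and the allowlist of phrases treated as a single concept are hypothetical, and a flagged question still needs a human to decide whether it is really double-barreled.

```python
import re

# Connectors that often, but not always, join two separate concepts.
CONNECTOR = re.compile(r"\b(and|or)\b")

# Hypothetical allowlist: phrases respondents tend to read as one thing.
SINGLE_CONCEPT_PHRASES = ("friends and family",)


def flag_for_review(question: str) -> list[str]:
    """Return the connectors found in a question, skipping allowlisted phrases."""
    text = question.lower()
    for phrase in SINGLE_CONCEPT_PHRASES:
        text = text.replace(phrase, "")
    return CONNECTOR.findall(text)


questions = [
    "How satisfied are you with the quality and price of our product?",
    "Do you find our app easy to navigate or confusing?",
    "Would you recommend our company to friends and family?",
    "How helpful was the training you received during onboarding?",
]

for q in questions:
    hits = flag_for_review(q)
    status = f"review ({', '.join(hits)})" if hits else "no connector found"
    print(f"{status}: {q}")
```

Note what this misses: the onboarding question passes cleanly because an embedded assumption contains no connector. Treat the script as a prompt for the manual test, not a replacement for it.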

The real cost to your data

The damage from double-barreled questions goes beyond individual responses. It undermines your entire analysis in several ways.

You can't identify which dimension needs attention. If respondents rate "speed and reliability" poorly, what do you fix? You don't know whether the problem is speed, reliability, or both. The combined question gives you a signal that something's wrong but withholds the specificity you need to act.

Averages become meaningless. When respondents are averaging two different feelings into one number, the aggregate average of those averages is twice removed from reality. A mean score of 3.5 on "speed and reliability" could mean most people rate both around 3.5, or it could mean speed averages 4.8 and reliability averages 2.2. You can't tell.
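The arithmetic is easy to check. The sketch below uses two made-up sets of ratings on a 5-point scale and assumes, as a simplification, that every respondent reports the midpoint of their speed and reliability ratings. In a real double-barreled survey you would only ever see the combined number.

```python
from statistics import mean

# Hypothetical (speed, reliability) ratings per respondent, 1-5 scale.
consistent = [(3, 4), (4, 3), (4, 3), (3, 4)]
polarized = [(5, 2), (5, 2), (5, 2), (5, 2), (4, 3)]


def combined_mean(ratings):
    """Mean of the single blended answers (assumes each respondent averages)."""
    return mean([(speed + reliability) / 2 for speed, reliability in ratings])


def dimension_means(ratings):
    """Mean speed and mean reliability, which the combined question never reveals."""
    speeds = [speed for speed, _ in ratings]
    reliabilities = [reliability for _, reliability in ratings]
    return mean(speeds), mean(reliabilities)


for label, ratings in [("consistent", consistent), ("polarized", polarized)]:
    speed_avg, reliability_avg = dimension_means(ratings)
    print(f"{label}: combined={combined_mean(ratings)}, "
          f"speed={speed_avg}, reliability={reliability_avg}")
```

Both groups produce a combined mean of 3.5, yet one has speed and reliability both at 3.5 while the other has speed at 4.8 and reliability at 2.2. Only the split questions can tell them apart.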

Comparisons break down. If you're comparing satisfaction across customer segments, products, or time periods, double-barreled questions make it impossible to know which dimension is driving the differences. Segment A might score higher because of speed, while Segment B scores lower because of reliability, but the combined question hides this entirely.

You waste respondent goodwill. Survey fatigue is real, and forcing people to answer questions they can't accurately respond to is frustrating. Respondents notice when a question doesn't fit their experience, and that frustration can reduce engagement with the rest of the survey.

How to fix them

The fix for double-barreled questions is almost always the same: split the question in two.

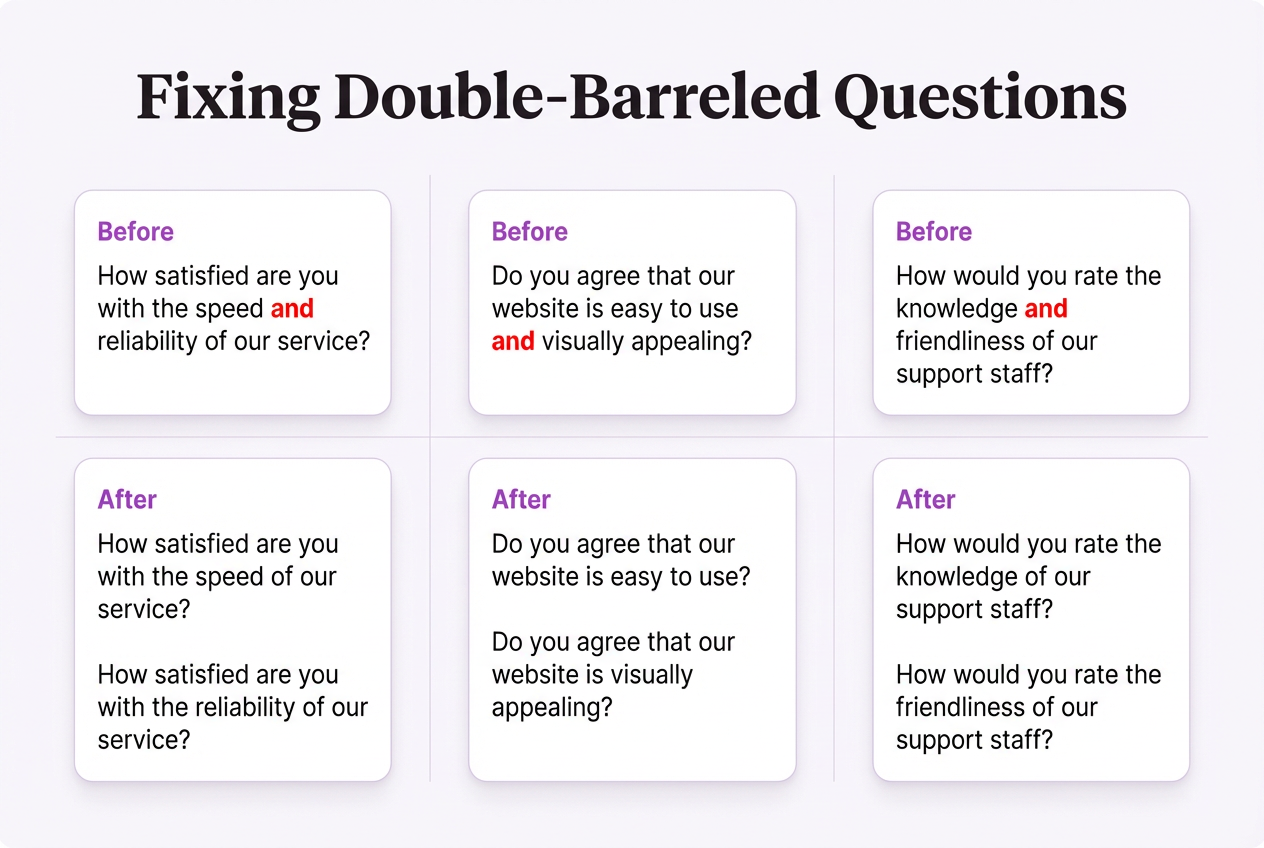

Before:

"How satisfied are you with the speed and reliability of our service?"

After:

"How satisfied are you with the speed of our service?"

"How satisfied are you with the reliability of our service?"

Two questions instead of one. Each is clear, answerable, and produces data you can act on independently. The survey gets slightly longer, but the data gets significantly better.

If you're worried about survey length, the answer isn't to combine questions—it's to prioritize. Ask yourself which dimensions actually matter for the decisions you'll make. Cut the ones that don't. A shorter survey with clean single-topic questions beats a longer survey padded with ambiguous compound ones.

When compound questions seem unavoidable

Occasionally, the two concepts really are inseparable in your respondents' minds. "How satisfied are you with your work-life balance?" technically combines "work" and "life," but most people experience them as a single construct. In these cases, the compound question works because respondents interpret it as one thing, not two.

The test is whether your respondents would naturally see the concepts as distinct. If you asked "How's the food?" at a restaurant, nobody would object that you've combined taste, temperature, and presentation into one question. People think of "the food" as a unified experience. But "How's the food and service?" crosses the line, because people routinely have different opinions about each.

Real-world examples: before and after

Here are five compound questions: four rewritten as clean, single-concept alternatives, and one that is fine as written.

Before: "Do you agree that our website is easy to use and visually appealing?"

After: "How easy is our website to use?" + "How visually appealing is our website?"

Before: "How would you rate the knowledge and friendliness of our support staff?"

After: "How knowledgeable is our support staff?" + "How friendly is our support staff?"

Before: "Are you satisfied with the variety and quality of products available?"

After: "How satisfied are you with the variety of products available?" + "How satisfied are you with the quality of products available?"

Before: "Would you recommend our company to friends and family?"

After: This one is actually fine—"friends and family" functions as a single audience in respondents' minds, not two separate groups to consider independently.

Before: "How often do you use our mobile app to check your account balance or make payments?"

After: "How often do you use our mobile app to check your account balance?" + "How often do you use our mobile app to make payments?"

Building better habits

Double-barreled questions persist because they feel natural to write. So, the fix isn't a one-time edit—it's a habit change. Here's how to build it:

Review every "and" and "or" in your survey. Treat every conjunction as a flag. Some will be fine (compound subjects like "friends and family"). Many will reveal a double-barreled question hiding in plain sight.

Have someone outside the project review the survey. Fresh eyes catch compound questions that the designer—who knows what they meant—will miss. Ask your reviewer: "Could someone have different answers for different parts of this question?"

Test with real respondents. During pilot testing, ask a few participants to think aloud as they answer. If they pause, frown, or say "Well, it depends which part you mean," you've found a double-barreled question.

Create a pre-launch checklist. Add "check for double-barreled questions" as a formal step in your survey development process, alongside checking for balanced scales, logical skip patterns, and mobile formatting. When it's part of a checklist, it's harder to forget. Over time, you'll start writing single-concept questions by default rather than catching the compound ones after the fact.

Watch for them in questions you didn't write. Double-barreled questions show up in survey templates, questionnaire databases, and "best practice" guides with alarming regularity. Just because a question appears in a professionally designed template doesn't mean it's well-designed. Apply the same scrutiny to borrowed questions that you'd apply to your own.

When splitting isn't enough

Occasionally, you'll discover that a double-barreled question is actually masking a deeper design problem. Splitting "How satisfied are you with the quality and timeliness of our deliverables?" into two questions is the right mechanical fix. But it also raises a strategic question: are both dimensions equally important to measure in this survey?

Sometimes the answer is no. Maybe timeliness is the critical issue you're investigating, and quality was only included because the survey designer thought of it while writing the timeliness question. In that case, the right fix isn't just splitting—it's cutting the less relevant dimension entirely and using that survey real estate for something more targeted.

Double-barreled questions are often a symptom of unclear survey objectives. When you know exactly what you're trying to measure and why, each question has a focused purpose that naturally prevents the "while we're at it" impulse to pile concepts together.

The effort of catching and splitting double-barreled questions pays dividends in data you can trust, analysis you can act on, and survey respondents who don't have to struggle to tell you what they think.